Part 1: Image Alignment

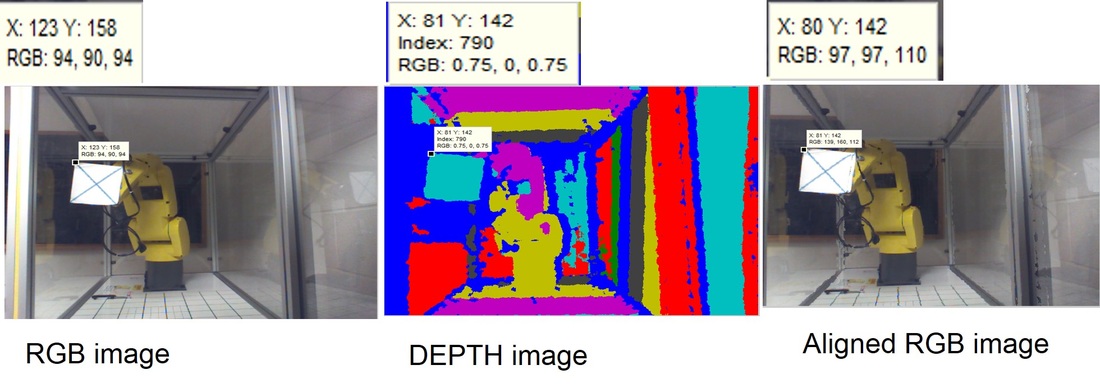

Because the Kinect camera captures the RGB and depth images simultaneously, we can align the RGB image to the depth image. As a result, every point in the aligned RGB image carries 3-D information (X, Y, and depth).

The first image is the RGB image before alignment, and the second is the depth image. Aligning the RGB image with the depth image as the reference gives the aligned RGB image, shown in the rightmost picture.
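To make "3-D information per pixel" concrete, here is a minimal sketch that turns an aligned pixel and its depth reading into a metric 3-D point using a pinhole camera model. The write-up does not say whether pixel coordinates were converted to metric units, so this is only one common way to do it; the function name and the intrinsics (`FX`, `FY`, `CX`, `CY`) are placeholder assumptions, not calibration values from this project.

```python
def pixel_to_point(u, v, depth_mm, fx, fy, cx, cy):
    """Back-project pixel (u, v) with depth in mm to a camera-frame point in mm."""
    x = (u - cx) * depth_mm / fx
    y = (v - cy) * depth_mm / fy
    return (x, y, depth_mm)

# Hypothetical intrinsics for a 640x480 image (assumed values, not calibrated).
FX, FY, CX, CY = 580.0, 580.0, 320.0, 240.0

center = pixel_to_point(320, 240, 1000.0, FX, FY, CX, CY)  # pixel on the optical axis
offset = pixel_to_point(378, 240, 1000.0, FX, FY, CX, CY)  # 58 pixels to the right
```

A pixel on the optical axis maps to X = Y = 0, and the lateral offset grows in proportion to depth, which is why the depth value is needed to recover a real position from a 2-D picture.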

Part 2: Sampling & Resampling

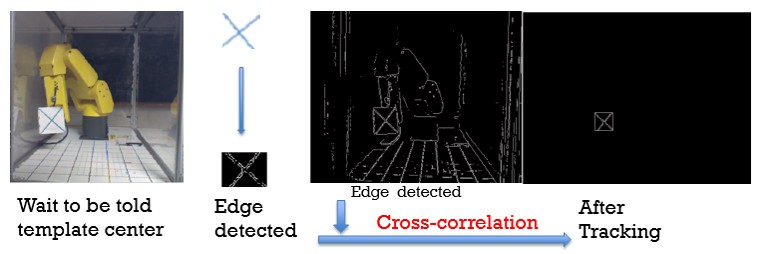

Matlab can automatically sample the target point in a picture and its corresponding point in the robot's coordinate system. After a template is chosen in the RGB image, edge detection is needed. We then compute the 2-D cross-correlation between the target image and the template and take the point with the highest correlation score as the match.
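The matching step can be sketched as follows. This is a plain-Python illustration, not the Matlab code used in the project, and it uses the normalized form of cross-correlation, which is an assumption because the text only says "2-D cross-correlation". The function name `ncc_match` and the toy edge map are made up for the example.

```python
import math

def ncc_match(image, template):
    """Return ((row, col), score) of the best normalized cross-correlation match."""
    th, tw = len(template), len(template[0])
    ih, iw = len(image), len(image[0])
    t_vals = [v for row in template for v in row]
    t_mean = sum(t_vals) / len(t_vals)
    t_dev = [v - t_mean for v in t_vals]
    t_norm = math.sqrt(sum(d * d for d in t_dev))
    best_pos, best_score = None, -2.0
    for r in range(ih - th + 1):
        for c in range(iw - tw + 1):
            patch = [image[r + i][c + j] for i in range(th) for j in range(tw)]
            p_mean = sum(patch) / len(patch)
            p_dev = [v - p_mean for v in patch]
            p_norm = math.sqrt(sum(d * d for d in p_dev))
            if p_norm == 0 or t_norm == 0:
                continue  # flat patch or template: correlation is undefined
            score = sum(a * b for a, b in zip(p_dev, t_dev)) / (p_norm * t_norm)
            if score > best_score:
                best_pos, best_score = (r, c), score
    return best_pos, best_score

# Toy edge map: a small corner pattern whose top-left pixel is at row 1, column 2.
edges = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0],
]
template = [[1, 1],
            [1, 0]]
position, score = ncc_match(edges, template)
```

The returned position is the top-left corner of the best-matching window; adding half the template size gives the tracked center.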

The robot carries the template, shown in the leftmost picture above, through 64 points whose trace forms a cube.
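One way to lay out 64 sampling points that trace a cube is a 4 x 4 x 4 grid. The arrangement, the origin, and the 100 mm spacing below are assumptions for illustration; the write-up only states that there are 64 points forming a cube.

```python
from itertools import product

def cube_grid(origin, spacing_mm, n_per_axis=4):
    """Return n_per_axis**3 waypoints on a regular grid starting at origin (mm)."""
    ox, oy, oz = origin
    steps = range(n_per_axis)
    return [(ox + i * spacing_mm, oy + j * spacing_mm, oz + k * spacing_mm)
            for i, j, k in product(steps, repeat=3)]

# Hypothetical robot-frame origin and spacing, chosen only for the example.
waypoints = cube_grid(origin=(400.0, -150.0, 100.0), spacing_mm=100.0)
```

Spreading the points over all three axes matters: samples that lie in a single plane cannot constrain the transformation along the remaining direction.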

Sampling is used to generate the coordinate transformation matrix, which requires the 3-D information of at least 4 points. Sampling 64 points makes the transformation matrix much more accurate.
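To illustrate why four points are the minimum and why more points help, here is a generic least-squares fit of a 3x4 transformation matrix (12 unknowns, 3 equations per point pair). It is a sketch under that affine-model assumption, not the method used in Part 3; all function names and the sample data are invented for the example.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("points are degenerate (for example, coplanar)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x

def fit_affine(cam_pts, robot_pts):
    """Least-squares 3x4 matrix T such that robot ~ T * [x, y, z, 1]."""
    rows = [[x, y, z, 1.0] for x, y, z in cam_pts]
    # Normal equations (P^T P) w = P^T q, solved once per robot axis.
    ptp = [[sum(r[i] * r[j] for r in rows) for j in range(4)] for i in range(4)]
    t = []
    for axis in range(3):
        ptq = [sum(r[i] * q[axis] for r, q in zip(rows, robot_pts)) for i in range(4)]
        t.append(solve_linear(ptp, ptq))
    return t

def apply_affine(t, p):
    """Map a camera-frame point p through the fitted 3x4 matrix t."""
    x, y, z = p
    return tuple(r[0] * x + r[1] * y + r[2] * z + r[3] for r in t)

# Four non-coplanar points: the minimum that determines all 12 unknowns.
cam = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]
# Made-up robot-frame points: a 90 degree rotation about z plus a shift.
robot = [(10, 20, 30), (10, 21, 30), (9, 20, 30), (10, 20, 31)]
T = fit_affine(cam, robot)
mapped = apply_affine(T, (1, 1, 1))
```

With exactly four points the fit passes through every sample, noise included. With 64 points the system is overdetermined and the least-squares solution averages out the noise of individual samples.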

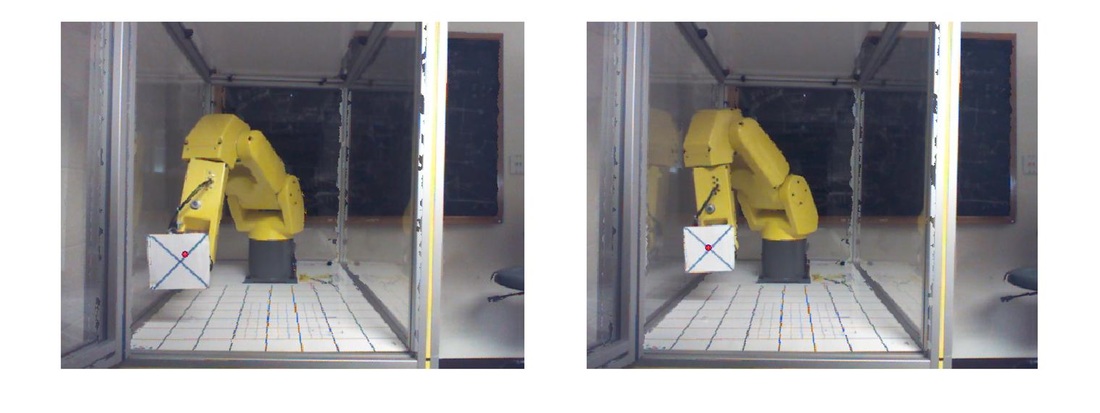

Sometimes 2-D cross-correlation introduces errors. To reduce them, we apply a step we call "resampling": we manually compare the tracked center with the real center and decide whether the tracked center should be modified.

The left picture shows the tracked center, and the right picture shows the modified one.

Part 3: Transformation Matrix

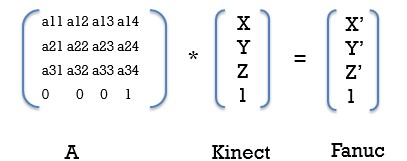

A Matlab function called “absor”, which implements Horn's absolute orientation method, finds the transformation matrix from the 3-D point pairs we pass to it. This transformation matrix provides a one-to-one mapping from the projective (camera) coordinate to the Cartesian (robot) coordinate. This means that once we locate a point in a 2-D picture, we can find its real-world location in the robot coordinate.

In the picture, X, Y, Z in Kinect represent the camera coordinate, and X', Y', Z' in Fanuc represent the robot coordinate.
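For readers without Matlab, the idea behind absor can be sketched in plain Python. The function is based on Horn's closed-form quaternion solution to the absolute orientation problem; the code below is an independent, simplified illustration of that method (rotation and translation only, no scaling), not a port of absor. The name `horn_rigid_fit`, the power-iteration eigen-solver, and the sample points are assumptions made for the example.

```python
import math

def horn_rigid_fit(src, dst, iters=200):
    """Estimate rotation R (3x3) and translation t such that dst ~ R*src + t."""
    n = len(src)
    ca = [sum(p[i] for p in src) / n for i in range(3)]
    cb = [sum(p[i] for p in dst) / n for i in range(3)]
    # Cross-covariance: S[i][j] sums centered src axis i times centered dst axis j.
    S = [[sum((a[i] - ca[i]) * (b[j] - cb[j]) for a, b in zip(src, dst))
          for j in range(3)] for i in range(3)]
    (sxx, sxy, sxz), (syx, syy, syz), (szx, szy, szz) = S
    N = [
        [sxx + syy + szz, syz - szy, szx - sxz, sxy - syx],
        [syz - szy, sxx - syy - szz, sxy + syx, szx + sxz],
        [szx - sxz, sxy + syx, -sxx + syy - szz, syz + szy],
        [sxy - syx, szx + sxz, syz + szy, -sxx - syy + szz],
    ]
    # Power iteration on N + shift*I converges to the eigenvector of the
    # largest eigenvalue; the shift makes every eigenvalue non-negative.
    shift = max(sum(abs(v) for v in row) for row in N)
    q = [1.0, 0.1, 0.2, 0.3]  # arbitrary start vector
    for _ in range(iters):
        q = [sum(N[i][j] * q[j] for j in range(4)) + shift * q[i] for i in range(4)]
        norm = math.sqrt(sum(v * v for v in q))
        q = [v / norm for v in q]
    q0, qx, qy, qz = q
    # Unit quaternion to rotation matrix.
    R = [
        [q0*q0 + qx*qx - qy*qy - qz*qz, 2*(qx*qy - q0*qz), 2*(qx*qz + q0*qy)],
        [2*(qy*qx + q0*qz), q0*q0 - qx*qx + qy*qy - qz*qz, 2*(qy*qz - q0*qx)],
        [2*(qz*qx - q0*qy), 2*(qz*qy + q0*qx), q0*q0 - qx*qx - qy*qy + qz*qz],
    ]
    t = [cb[i] - sum(R[i][j] * ca[j] for j in range(3)) for i in range(3)]
    return R, t

# Made-up point pairs: camera-frame points rotated 90 degrees about z, then shifted.
kinect = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)]
fanuc = [(10, 20, 30), (10, 21, 30), (9, 20, 30), (10, 20, 31)]
R, t = horn_rigid_fit(kinect, fanuc)
```

Once `R` and `t` are known, any camera-frame point `p` maps to the robot frame as `R*p + t`. The sketch does not handle degenerate input (for example, all points identical), and a production version would use a proper eigen-solver instead of power iteration.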

Part 4: Mapping Error Checking

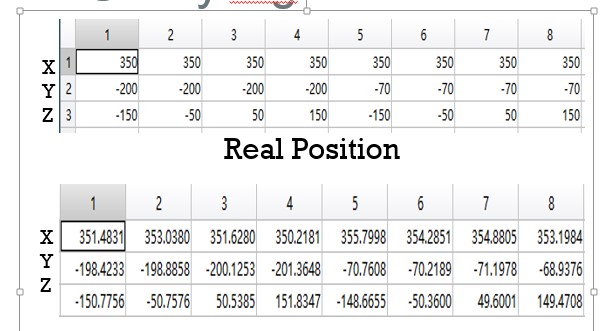

Comparing the points transformed from the Kinect camera coordinate to the robot coordinate with the points sampled directly in the robot coordinate, we found small, tolerable errors. Here is an error estimate obtained from our data: the error is zero-mean, and the largest deviations are 5 mm in x, 4.3 mm in y, and 4.3 mm in z.
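The check described above amounts to computing, for each axis, the mean error and the largest absolute deviation between the transformed points and the sampled points. A minimal sketch follows; the function name and the coordinates are made up to show the calculation and are not the project's data.

```python
def axis_error_stats(predicted, measured):
    """Per-axis mean error and largest absolute deviation (same units as input)."""
    n = len(predicted)
    stats = {}
    for i, axis in enumerate("xyz"):
        errors = [p[i] - m[i] for p, m in zip(predicted, measured)]
        stats[axis] = {
            "mean": sum(errors) / n,
            "max_abs": max(abs(e) for e in errors),
        }
    return stats

# Invented coordinates in mm, only to demonstrate the computation.
predicted = [(101.0, 49.0, 200.5), (199.0, 51.0, 199.5)]  # Kinect points mapped to robot frame
measured = [(100.0, 50.0, 200.0), (200.0, 50.0, 200.0)]   # points sampled in robot frame
stats = axis_error_stats(predicted, measured)
```

A mean near zero on every axis indicates that the transformation has no systematic offset, and the maximum absolute deviation bounds the positioning error to expect at any sampled point.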